Every cloud governance team runs tag cleanups. Every cloud governance team runs them again the following month. The backlog never reaches zero. The unallocated cost line in the monthly report never disappears. The cleanup is not the problem. The problem is that cleanup is the strategy.

Reactive tagging is structurally broken. Resources deploy untagged, they sit in the billing ledger as unattributed spend, someone runs a tagging sweep, and 48 hours later the next deployment creates a new untagged resource. You are cleaning a floor while the pipe is still leaking.

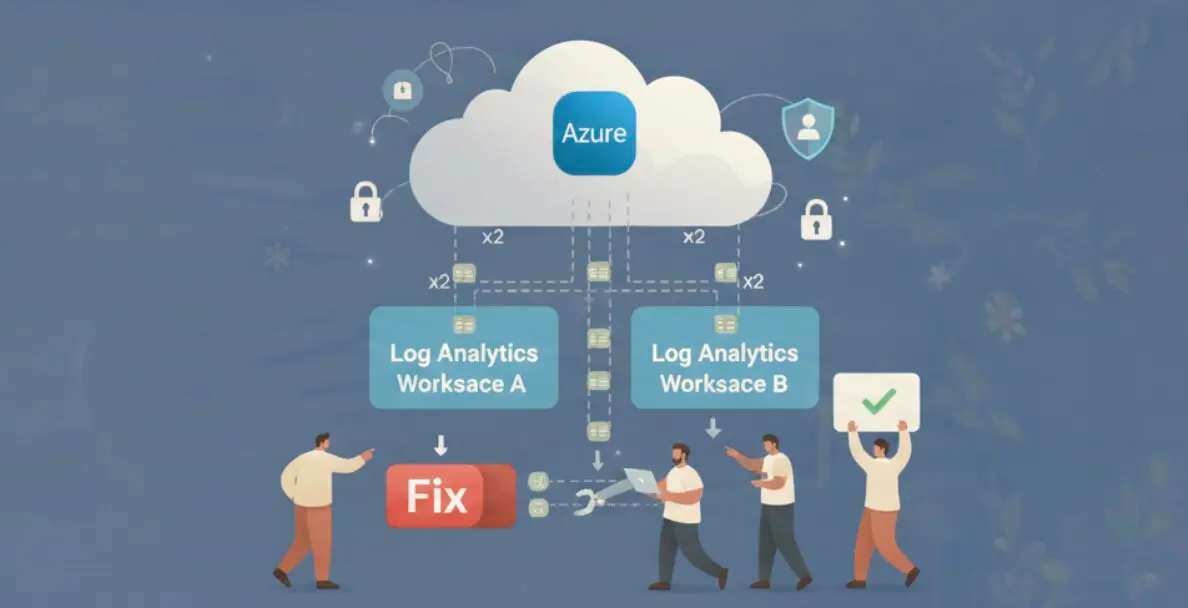

The fix is moving tag enforcement to the point where a resource first becomes known to your governance system: discovery time. Not deployment time, where enforcement blocks pipelines. Not cost review time, where the damage is already done. Discovery time: the window between when a resource is created and when it generates its first unattributed billing entry.

Why Tag Cleanup Is a Loop You Cannot Win

The math on reactive tagging makes the failure mode obvious. An organization deploying 50 new resources per day with a weekly tagging sweep accumulates 350 untagged resources before the sweep runs. The sweep clears the backlog to near zero, and the cycle restarts. Each of those 350 resources sat untagged for up to 7 days, and the cost it generated in that window cannot be retroactively attributed.

Scale that up. A mid-size engineering organization running 200 deployments per day has 1,400 untagged resources entering the estate each week. Organizations at this scale that rely on sweeps report 23-35% of cloud spend as unallocated in monthly reports, because deployment velocity outpaces enforcement cadence. Building a tagging strategy that actually sticks requires shifting the enforcement point, not improving the cleanup frequency.
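The backlog arithmetic above can be sketched in a few lines. This is a simplified model, not a measurement: it assumes a constant deployment rate, that every resource deploys untagged, and that a sweep clears the backlog completely. The function name `sweep_backlog` is hypothetical.

```python
def sweep_backlog(resources_per_day: int, sweep_interval_days: int) -> dict:
    """Model one sweep cycle under a constant deployment rate (an assumption)."""
    # Resources that pile up between two sweeps.
    peak_backlog = resources_per_day * sweep_interval_days
    # A resource created d days before the sweep stays untagged for d days.
    # Summing d = 1..interval gives the untagged resource-days per cycle.
    untagged_resource_days = resources_per_day * sum(
        range(1, sweep_interval_days + 1)
    )
    return {
        "peak_backlog": peak_backlog,
        "untagged_resource_days": untagged_resource_days,
    }


weekly_50 = sweep_backlog(50, 7)    # the 50/day example
weekly_200 = sweep_backlog(200, 7)  # the 200/day example
daily_200 = sweep_backlog(200, 1)   # same pace, daily sweeps

for label, result in [("50/day weekly", weekly_50),
                      ("200/day weekly", weekly_200),
                      ("200/day daily", daily_200)]:
    print(label, result)
```

Even with daily sweeps the model never reaches zero: a faster cadence shrinks the backlog, but every cycle still starts with a day of untagged resources.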

The secondary failure of cleanup-based tagging is attribution accuracy. A tag applied three weeks after resource creation reflects what the resource is now, not what it was when it generated cost. If an instance moved from a staging environment to production before the sweep ran, the cost from its staging period is permanently misattributed — if it gets attributed at all.

Why Enforcement at Deployment Breaks Without a Complete Taxonomy

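AWS, Azure, and GCP all support tag enforcement at resource creation time: AWS through service control policies and IAM conditions on request tags, Azure through Azure Policy, and GCP through Organization Policy. (AWS Config can flag resources that are missing tags, but it evaluates them after creation rather than blocking the deployment.) The mechanism is straightforward: define required tags, and the policy engine blocks any resource that deploys without them.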

This works when every team has a fully defined tag taxonomy encoded in their IaC modules. It breaks for everyone else.

An engineer deploying a new VPC for a compliance test via the AWS console does not have a tag module imported. The policy blocks the deployment. The engineer either escalates for an exception, adds placeholder tags to pass validation, or finds a workaround. The outcome is worse than no enforcement: tags that exist but carry wrong values, or a governance exception that creates a permanent carve-out.
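The placeholder-tag outcome is easy to reproduce. The sketch below is illustrative only: the required keys and the placeholder list are assumptions, and the presence check stands in for what a creation-time policy typically validates, not any provider's actual engine.

```python
# Hypothetical required tag keys and placeholder values, for illustration.
REQUIRED_TAGS = ("owner", "cost-center", "environment")
PLACEHOLDER_VALUES = {"", "tbd", "todo", "n/a", "none", "unknown"}


def passes_presence_check(tags: dict) -> bool:
    """A presence-only gate: every required key exists, values unchecked."""
    return all(key in tags for key in REQUIRED_TAGS)


def suspicious_tags(tags: dict) -> list:
    """Required keys that are missing or carry a placeholder value."""
    return sorted(
        key for key in REQUIRED_TAGS
        if tags.get(key, "").strip().lower() in PLACEHOLDER_VALUES
    )


# Tags an engineer might type into the console just to get past the gate.
console_vpc_tags = {"owner": "tbd", "cost-center": "n/a", "environment": "prod"}

print(passes_presence_check(console_vpc_tags))  # the gate is satisfied
print(suspicious_tags(console_vpc_tags))        # but two values carry no meaning
```

The deployment passes, the compliance dashboard counts the resource as tagged, and the cost still cannot be attributed to a real owner or cost center.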

| Enforcement Approach | Compliance Rate | Latency to Tagged | Failure Mode |
|---|---|---|---|
| Manual sweep (weekly) | Low (35-65%) | 3-7 days | Backlog grows faster than sweeps run |
| IaC module enforcement | High for IaC deployments, 0% for console | At deployment | Console deployments entirely uncovered |
| Policy block at creation | High for covered resources | At deployment | Failed deployments without full taxonomy; exceptions proliferate |
| Discovery-time suggestion | High, improves over time | Minutes to hours | Requires human review; suggestions need acceptance |

The key distinction in the last row is that discovery-time suggestion does not block anything. It creates a governance workflow that operates parallel to the deployment pipeline. A resource deploys, the governance system discovers it, generates a tag suggestion based on account context and resource metadata, and queues it for review. The deployment succeeds regardless of tag state. The tagging happens through the governance workflow rather than as a deployment gate.

Discovery Time Is the Right Enforcement Point

When a resource is created in AWS or Azure, the cloud provider emits an event. Amazon EventBridge receives RunInstances, CreateBucket, CreateVpc, and hundreds of other creation calls as API events recorded by CloudTrail, typically within seconds of the call. Azure Event Grid delivers resource creation events with similar latency. A governance system subscribed to these event streams can discover a new resource within 2-5 minutes of its creation.
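On the AWS side, discovery can be sketched as an event pattern plus a small parser. This is a minimal sketch, not a complete integration: it assumes CloudTrail management events are reaching EventBridge, it handles only the three calls named above, and the field paths reflect the usual shape of these CloudTrail records and should be verified against real events in your account. The function name `discovered_resources` is hypothetical.

```python
# Event pattern for an EventBridge rule, written as a Python dict.
# It would be JSON-serialized when the rule is created.
CREATION_EVENT_PATTERN = {
    "detail-type": ["AWS API Call via CloudTrail"],
    "detail": {"eventName": ["RunInstances", "CreateBucket", "CreateVpc"]},
}


def discovered_resources(event: dict) -> list:
    """Extract newly created resource IDs from one creation event."""
    detail = event.get("detail") or {}
    if detail.get("errorCode"):
        return []  # a failed API call created nothing
    request = detail.get("requestParameters") or {}
    response = detail.get("responseElements") or {}
    name = detail.get("eventName")

    if name == "RunInstances":
        items = (response.get("instancesSet") or {}).get("items", [])
        kind, ids = "ec2:instance", [item["instanceId"] for item in items]
    elif name == "CreateBucket":
        bucket = request.get("bucketName")
        kind, ids = "s3:bucket", [bucket] if bucket else []
    elif name == "CreateVpc":
        vpc_id = (response.get("vpc") or {}).get("vpcId")
        kind, ids = "ec2:vpc", [vpc_id] if vpc_id else []
    else:
        return []  # not a creation call this sketch knows about

    creator = (detail.get("userIdentity") or {}).get("arn")
    return [
        {"kind": kind, "id": rid, "account": event.get("account"),
         "region": event.get("region"), "created_by": creator}
        for rid in ids
    ]


# Trimmed sample event with made-up identifiers.
sample_event = {
    "detail-type": "AWS API Call via CloudTrail",
    "account": "123456789012",
    "region": "us-east-1",
    "detail": {
        "eventName": "RunInstances",
        "userIdentity": {"arn": "arn:aws:iam::123456789012:user/example"},
        "responseElements": {
            "instancesSet": {"items": [{"instanceId": "i-0abc"},
                                       {"instanceId": "i-0def"}]}
        },
    },
}

found = discovered_resources(sample_event)
print(found)
```

The account, region, and creator identity captured here are the kind of metadata a suggestion engine can use as context.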

That 2-5 minute window is the correct enforcement point. The resource exists and is discoverable. It has accrued only a few minutes of cost. It has not yet appeared in a cost report as unallocated spend. A tag suggestion generated at this point and accepted within the same business day means the resource carries correct tags before its first full billing cycle closes.

Compare this to cleanup: a resource discovered at T+5 minutes and tagged within hours versus a resource discovered at monthly cost review and tagged at T+30 days. In the second scenario, 30 days of cost is permanently unattributed regardless of how accurate the eventual tag is.
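To put numbers on that comparison, here is a small worked example. It assumes, as the paragraph does, that cost accrued before tagging stays unattributed, and it uses an illustrative $24/hour rate and a four-hour same-day review; both figures are assumptions chosen for the arithmetic.

```python
HOURS_PER_DAY = 24


def unattributed_cost(hourly_rate: float, hours_untagged: float) -> float:
    """Cost accrued while the resource carried no tags."""
    return hourly_rate * hours_untagged


rate = 24.0  # illustrative hourly rate for one expensive instance

# Discovery-time path: suggestion accepted four hours after creation.
same_day = unattributed_cost(rate, 4)
# Cleanup path: tagged at the monthly cost review, 30 days after creation.
monthly_review = unattributed_cost(rate, 30 * HOURS_PER_DAY)

print(f"tagged same day:      ${same_day:,.2f} unattributed")
print(f"tagged at T+30 days:  ${monthly_review:,.2f} unattributed")
```

Under these assumptions the same resource leaves $96 unattributed on one path and $17,280 on the other.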

Auto Tagging: The Pending Review, Accepted, Rejected Workflow

zopnight’s Auto Tagging feature is built on the discovery-time model. When a resource is discovered across AWS or Azure, the system generates tag suggestions immediately. Suggestions are based on resource metadata (account, region, resource type, existing tags on adjacent resources) and existing patterns in the organization’s tagging history.
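To make the idea of suggesting tags from adjacent resources concrete, here is one possible heuristic. It is an illustrative assumption, not a description of how Auto Tagging is implemented: the function `suggest_tags`, the tag keys, and the 60% agreement threshold are all hypothetical.

```python
from collections import Counter


def suggest_tags(new_resource: dict, known_resources: list,
                 keys=("team", "environment"), min_share=0.6) -> dict:
    """Suggest the dominant tag value among resources in the same account."""
    neighbors = [r for r in known_resources
                 if r["account"] == new_resource["account"]]
    suggestion = {}
    for key in keys:
        values = Counter(r["tags"][key] for r in neighbors if key in r["tags"])
        if not values:
            continue  # no neighbor carries this key, so nothing to suggest
        value, count = values.most_common(1)[0]
        # Only suggest when enough neighbors agree on the value.
        if count / sum(values.values()) >= min_share:
            suggestion[key] = value
    return suggestion


known = [
    {"account": "111", "tags": {"team": "payments", "environment": "prod"}},
    {"account": "111", "tags": {"team": "payments", "environment": "prod"}},
    {"account": "111", "tags": {"team": "payments", "environment": "staging"}},
    {"account": "222", "tags": {"team": "search", "environment": "prod"}},
]
new_instance = {"account": "111", "tags": {}}

suggested = suggest_tags(new_instance, known)
print(suggested)
```

A heuristic like this will sometimes be wrong, which is why the suggestion goes to a review queue instead of being applied automatically.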

The suggestions enter a three-tab workflow under the Insights section: Pending Review, Accepted, and Rejected.

Pending Review contains every suggestion that has not yet been acted on. This is the daily work queue for a platform team doing tag governance. Filter by provider, review the suggested tags for accuracy, and accept or reject. A platform team spending 10 minutes per day on the Pending Review tab can keep up with a 200-resource/day deployment pace without accumulating a backlog. That works out to about three seconds per suggestion, so it depends on most suggestions being accurate enough to accept at a glance.

Accepted contains all suggestions that have been approved and applied. This is the audit trail: every resource that received tags through Auto Tagging, with the original suggestion, the accepted tags, and the timestamp. Compliance reporting draws from this tab.

Rejected tracks suggestions that were declined. Tracking rejections is as important as tracking acceptances. A suggestion that is rejected consistently for a specific resource type indicates a gap in the suggestion model. Rejections also prevent the same suggestion from re-surfacing on the next discovery cycle.
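The three tabs map naturally onto a small state machine. The sketch below is a hypothetical model of such a queue, not the product's implementation: the class and method names are invented for illustration, and it keys suggestions on the resource ID plus the suggested tags so that a rejected suggestion does not re-surface on the next discovery cycle.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from enum import Enum
from typing import Optional


class Status(Enum):
    PENDING_REVIEW = "pending_review"
    ACCEPTED = "accepted"
    REJECTED = "rejected"


@dataclass
class Suggestion:
    resource_id: str
    tags: dict
    status: Status = Status.PENDING_REVIEW
    decided_at: Optional[datetime] = None  # set when accepted or rejected


class ReviewQueue:
    def __init__(self):
        self._by_key = {}

    @staticmethod
    def _key(resource_id: str, tags: dict):
        return (resource_id, tuple(sorted(tags.items())))

    def suggest(self, resource_id: str, tags: dict) -> Optional[Suggestion]:
        """Queue a suggestion unless the same one was already seen."""
        key = self._key(resource_id, tags)
        if key in self._by_key:
            return None  # pending, accepted, or rejected: do not re-surface
        suggestion = Suggestion(resource_id, dict(tags))
        self._by_key[key] = suggestion
        return suggestion

    def decide(self, suggestion: Suggestion, accept: bool) -> None:
        if suggestion.status is not Status.PENDING_REVIEW:
            raise ValueError("suggestion already decided")
        suggestion.status = Status.ACCEPTED if accept else Status.REJECTED
        suggestion.decided_at = datetime.now(timezone.utc)  # audit timestamp

    def tab(self, status: Status) -> list:
        return [s for s in self._by_key.values() if s.status is status]


queue = ReviewQueue()
first = queue.suggest("i-0abc", {"team": "ml"})
second = queue.suggest("vpc-0123", {"team": "platform"})
queue.decide(first, accept=False)
queue.decide(second, accept=True)

# The next discovery cycle proposes the rejected suggestion again.
resurfaced = queue.suggest("i-0abc", {"team": "ml"})
print(resurfaced, len(queue.tab(Status.REJECTED)), len(queue.tab(Status.ACCEPTED)))
```

Keeping rejected suggestions in the same store as accepted ones is what makes both the audit trail and the suppression behavior possible.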

The workflow is operational, not ceremonial. It does not require all-hands tagging sprints or quarterly cleanup projects. It is a standing queue that platform teams work incrementally, at the cadence that matches their deployment velocity.

Measuring Tag Governance by Spend Coverage, Not Resource Count

Most tagging compliance metrics count resources: percentage of resources with required tags. This metric systematically understates the governance gap for high-cost resources.

Take round, illustrative prices. A single untagged p3.16xlarge GPU instance on AWS costs about $24/hour. Fifty tagged t3.micro instances at $0.05/hour each total $2.50/hour. By resource count, that estate has 1 compliance violation out of 51 resources, a 98% compliance rate. By spend coverage, 91% of the compute cost is unattributed ($24 of $26.50 per hour) because the one expensive untagged instance dominates the spend.
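The two metrics can be computed side by side. The sketch below reproduces the GPU example, treating the 50 t3.micro instances as tagged, which is what the 1-in-51 violation count implies. The record layout and the function name `compliance` are assumptions for illustration, and the hourly costs are the round figures from the example.

```python
def compliance(resources: list) -> dict:
    """Return tag compliance by resource count and by spend, in percent."""
    tagged = [r for r in resources if r["tagged"]]
    total_spend = sum(r["hourly_cost"] for r in resources)
    tagged_spend = sum(r["hourly_cost"] for r in tagged)
    return {
        "resource_pct": 100 * len(tagged) / len(resources),
        "spend_pct": 100 * tagged_spend / total_spend,
    }


estate = [{"name": "gpu-1", "hourly_cost": 24.0, "tagged": False}]
estate += [
    {"name": f"micro-{i}", "hourly_cost": 0.05, "tagged": True}
    for i in range(50)
]

result = compliance(estate)
unattributed_pct = 100 - result["spend_pct"]

print(f"compliance by resource count: {result['resource_pct']:.0f}%")
print(f"compliance by spend:          {result['spend_pct']:.0f}%")
print(f"unattributed spend:           {unattributed_pct:.0f}%")
```

The same estate scores 98% on one metric and about 9% on the other, which is why the choice of metric determines what the governance program actually optimizes.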

| Organization | Tagged Resources | Total Resources | Resource Compliance | Tagged Spend | Total Spend | Spend Compliance |
|---|---|---|---|---|---|---|
| Org A | 490 | 500 | 98% | $4,200/mo | $18,000/mo | 23% |
| Org B | 380 | 500 | 76% | $17,100/mo | $18,000/mo | 95% |

Org A has better resource-count compliance, but its 10 untagged resources account for $13,800/mo, or 77% of total spend. Org B has tagged fewer resources by count, but the ones it has tagged are the expensive ones.

Discovery-time tagging addresses this because it generates a suggestion for every resource at creation, regardless of size or cost. A GPU instance that deploys untagged generates a Pending Review entry within minutes, the same as a t3.micro. Suggestion generation does not differentiate by resource cost, but review order can: when suggestions are prioritized by estimated cost impact, high-cost resources surface first in the review queue.
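Cost-based prioritization amounts to a sort on estimated spend. The sketch below is a hypothetical illustration: the `est_monthly_cost` field, the function names, and the sample figures are assumptions, and how a real system estimates cost is outside its scope.

```python
def prioritize(pending: list) -> list:
    """Order pending suggestions so the most expensive resources come first."""
    return sorted(pending, key=lambda s: s["est_monthly_cost"], reverse=True)


def reviews_to_cover(pending: list, target_share: float) -> int:
    """How many reviews, in priority order, cover a share of pending spend."""
    ordered = prioritize(pending)
    total = sum(s["est_monthly_cost"] for s in ordered)
    covered = 0.0
    for count, suggestion in enumerate(ordered, start=1):
        covered += suggestion["est_monthly_cost"]
        if covered >= target_share * total:
            return count
    return len(ordered)


# Made-up queue: one GPU instance, one database, one small instance.
pending = [
    {"resource_id": "micro-1", "est_monthly_cost": 36.0},
    {"resource_id": "gpu-1", "est_monthly_cost": 17280.0},
    {"resource_id": "db-1", "est_monthly_cost": 500.0},
]

ordered_ids = [s["resource_id"] for s in prioritize(pending)]
needed = reviews_to_cover(pending, 0.9)
print(ordered_ids, needed)
```

In this made-up queue a single review covers more than 90% of the pending spend, which is the effect cost-ordered review is meant to produce.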

Track tagging compliance as percentage of monthly spend covered by correct tags, not percentage of resources tagged. That number is what FinOps programs are actually trying to improve, and it is the number that discovery-time enforcement moves most effectively. SOC 2 audits of cloud infrastructure call for a similar completeness discipline: auditors check whether controls apply to everything in scope, not whether they apply to most things.