Most Kubernetes teams configure HPA, set a CPU threshold of 70%, and call it done. That works for stateless web services. It fails for everything else.

Queue workers, batch processors, async API consumers, and event-driven microservices don’t scale well on CPU. Their bottleneck is message backlog, not computation. KEDA fixes this by letting you scale on the signal that actually matters.

Why CPU Is the Wrong Signal for Most Workloads

Consider a Kafka consumer group processing order events. Messages arrive. Workers poll, deserialize, call a database, and write a result. The CPU load per message is low. The time spent waiting for I/O is high.

Now the topic backs up. 10,000 messages are waiting. Your workers are running at 5% CPU because they spend most of their time waiting on network I/O. The HPA sees 5% CPU and does nothing. Queue lag builds for minutes before CPU finally rises enough to trigger a scale event.

| Signal | Value |
|---|---|
| Queue depth | 10,000 messages |
| CPU | 5% |
| HPA action | none |
| User-visible latency | climbing |

This is the core problem. CPU is a lagging indicator for queue-based workloads. By the time it rises enough to trigger HPA, you are already behind. And scale-out takes another 60 to 90 seconds to bring pods online. The latency spike has already happened.

The inverse problem is over-provisioning. Teams that understand this delay keep their worker fleets large enough to absorb bursts. They set minReplicas: 5 and leave it there. Workers sit idle 80% of the day, burning compute budget for headroom they rarely use.

Figure: The gap between lag building up and the scale event firing is where latency accumulates

What KEDA Does and How It Fits Into Kubernetes

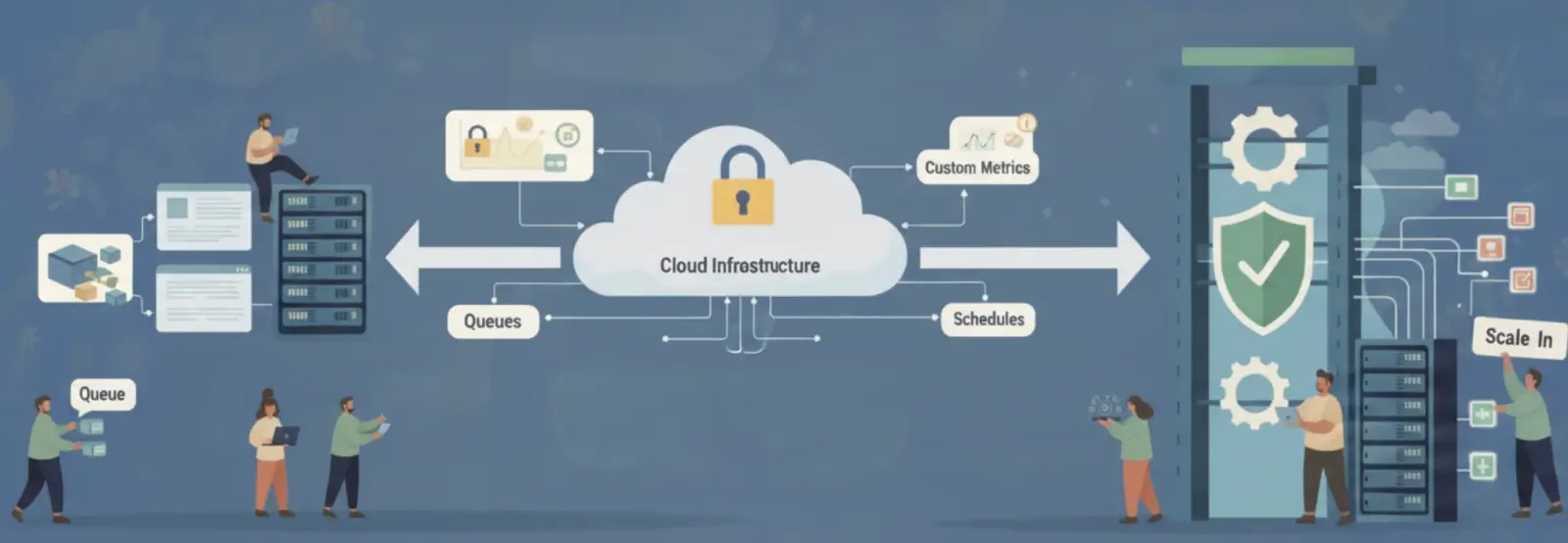

KEDA (Kubernetes Event-Driven Autoscaling) is a CNCF graduated project. It does not replace HPA. It extends it.

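When you install KEDA, it adds custom resources to your cluster, the most important being ScaledObject. A ScaledObject connects a Deployment, a StatefulSet, or any custom resource that exposes the scale subresource to an external event source; run-to-completion Jobs use the separate ScaledJob resource. KEDA polls that source, exposes the result as an external metric, and creates and manages an HPA that turns that metric into a desired replica count.

The key difference: KEDA can scale a workload to zero. Native HPA cannot by default; its minimum is 1 unless you enable the alpha HPAScaleToZero feature gate. KEDA handles the transitions between 0 and 1 replicas itself and leaves scaling between 1 and N to the HPA.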

Figure: KEDA polls external event sources and feeds metrics to HPA, which adjusts desired replicas to scale the pod fleet

KEDA ships with over 60 built-in scalers. The most commonly used in Kubernetes cost optimization are SQS, Kafka, RabbitMQ, Prometheus, and Cron. Each scaler exposes a specific numeric signal that becomes the scaling input.

Scaling on Queue Depth: SQS, RabbitMQ, and Kafka Consumer Lag

Each queue system exposes a different leading indicator.

| Scaler | Metric Used | Scaling Unit | Scale to Zero | Typical Use Case |
|---|---|---|---|---|
| AWS SQS | Messages visible | Messages per pod | Yes | Async job queues, email pipelines |
| Kafka | Consumer group lag | Messages per pod | Yes | Stream processing, event pipelines |
| RabbitMQ | Queue depth | Messages per pod | Yes | Task queues, microservice fanout |
| Azure Service Bus | Active message count | Messages per pod | Yes | Azure-native async workflows |
| Redis Lists | List length | Items per pod | Yes | Lightweight task queues |

The SQS scaler is the most common starting point. You configure queueLength, the scaler's target value: the number of messages each pod should handle. The resulting replica count is approximately ceil(currentMessages / queueLength), capped at maxReplicaCount.

If your queue has 500 messages and queueLength is 100, KEDA scales to 5 replicas. Once the queue drains to 0 and the cooldown period (300 seconds by default) has elapsed, KEDA scales to 0 replicas. No messages, no pods.
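The SQS setup described above could look roughly like the following manifest. It is a minimal sketch, not a production configuration: the Deployment name `image-worker`, the queue URL, the account ID, and the `aws-sqs-auth` TriggerAuthentication are all hypothetical, and the replica ceiling is illustrative. Note that the scaler's metadata key for the per-pod target is `queueLength`.

```yaml
# Sketch only: every name, URL, and limit below is an assumption for illustration.
apiVersion: keda.sh/v1alpha1
kind: ScaledObject
metadata:
  name: image-worker-sqs
spec:
  scaleTargetRef:
    name: image-worker            # Deployment to scale (assumed to exist)
  pollingInterval: 30             # seconds between queue checks (KEDA default)
  cooldownPeriod: 300             # idle seconds before scaling to zero (KEDA default)
  minReplicaCount: 0              # allow scale-to-zero
  maxReplicaCount: 20             # illustrative ceiling
  triggers:
    - type: aws-sqs-queue
      authenticationRef:
        name: aws-sqs-auth        # TriggerAuthentication assumed to be defined separately
      metadata:
        queueURL: https://sqs.us-east-1.amazonaws.com/123456789012/image-jobs
        awsRegion: us-east-1
        queueLength: "100"        # target messages per pod
```

With 500 messages in the queue, this target of 100 per pod corresponds to the 5 replicas in the example above.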

Figure: KEDA SQS scaler derives replica count from queue depth and scales workers down to zero when no messages are pending

The Kafka scaler works differently. It scales on consumer group lag, not raw message count. Lag is the difference between the latest offset on the topic and the offset your consumer group has committed. A lag of 50,000 across 5 partitions means your consumers are 50,000 messages behind.

This matters because lag is a more precise signal than raw message count or arrival rate. A consumer at offset 100 on a partition whose latest offset is 100 is not behind, even if messages are arriving. A consumer at offset 100 on a partition whose latest offset is 100,050 is behind and needs more workers.

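KEDA divides total lag by your lagThreshold to calculate replica count. A lag of 10,000 with lagThreshold: 1000 gives you 10 replicas, provided the topic has at least 10 partitions. By default the Kafka scaler does not scale beyond the partition count, because extra consumers in the group would sit idle.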
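A Kafka trigger for the lag-based scaling described here might be sketched as follows. The broker address, consumer group, topic, and Deployment name are hypothetical, and the sketch assumes an unauthenticated broker; a real cluster would usually also need a TriggerAuthentication for SASL or TLS.

```yaml
# Sketch only: broker address, group, topic, and names are assumptions.
apiVersion: keda.sh/v1alpha1
kind: ScaledObject
metadata:
  name: order-consumer-kafka
spec:
  scaleTargetRef:
    name: order-consumer          # Deployment running the consumer group
  minReplicaCount: 0
  maxReplicaCount: 10             # set to the partition count of the topic
  triggers:
    - type: kafka
      metadata:
        bootstrapServers: kafka.kafka.svc.cluster.local:9092
        consumerGroup: order-consumers
        topic: orders
        lagThreshold: "1000"      # target lag per replica
```

Setting maxReplicaCount to the partition count makes the ceiling explicit instead of relying on the scaler's default behavior of not exceeding it.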

Cron-Based Scaling: Pre-Warming Before the Spike

Reactive scaling has an unavoidable delay. KEDA detects rising queue depth, creates pods, and the pods take 30 to 90 seconds to become ready (image pull, container startup, application initialization). For workloads with predictable load patterns, that delay is unnecessary.

The KEDA cron scaler adds a minimum replica count on a schedule. You define a time window, a timezone, and a replica count. Outside the window, KEDA falls back to the queue-depth scaler (or zero). Inside the window, KEDA holds the minimum at your specified count regardless of queue depth.

A practical setup: the cron scaler ensures 3 replicas are running by 7:55 am. At 8:00 am, traffic arrives, and HPA scales out from 3 to however many are needed. Users never experience the cold-start latency. At 6:00 pm, the cron window closes, HPA scales in, and overnight the queue scaler brings the fleet to zero.

The cost of pre-warming 3 pods for 5 minutes is negligible. The cost of slow responses at the start of business hours, or of the over-provisioning needed to avoid them without scheduling, is not.
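The schedule above could be expressed by combining a cron trigger with a queue trigger on the same ScaledObject; the HPA then follows whichever trigger asks for more replicas. This is a sketch under assumptions: the timezone, weekday-only schedule, Deployment name, queue URL, and `aws-sqs-auth` reference are hypothetical.

```yaml
# Sketch only: names, timezone, schedule, and queue details are assumptions.
apiVersion: keda.sh/v1alpha1
kind: ScaledObject
metadata:
  name: api-worker-prewarm
spec:
  scaleTargetRef:
    name: api-worker
  minReplicaCount: 0              # overnight floor once the cron window closes
  maxReplicaCount: 30
  triggers:
    - type: cron
      metadata:
        timezone: America/New_York
        start: "55 7 * * 1-5"     # 7:55 am, Monday to Friday
        end: "0 18 * * 1-5"       # 6:00 pm, Monday to Friday
        desiredReplicas: "3"      # replicas held while the window is open
    - type: aws-sqs-queue
      authenticationRef:
        name: aws-sqs-auth        # TriggerAuthentication assumed to be defined separately
      metadata:
        queueURL: https://sqs.us-east-1.amazonaws.com/123456789012/api-jobs
        awsRegion: us-east-1
        queueLength: "100"
```

Inside the window the cron trigger keeps at least 3 replicas; if the queue trigger asks for more, the larger value wins. Outside the window only the queue trigger applies, so an empty queue leads to zero pods.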

Custom Metrics via Prometheus: Scaling on What Actually Matters

Not every workload maps cleanly to a queue. Some systems have an internal processing state that better represents the current load. A video transcoding service might scale on active job count, not CPU. An API gateway might scale on connection backlog, not requests per second.

KEDA’s Prometheus scaler lets you use any Prometheus metric as a scaling signal. You write a PromQL query. KEDA evaluates it on an interval. The result drives the replica count.

Figure: KEDA evaluates a PromQL query on a schedule and scales worker pods based on application-defined metrics

Example signals that work well here:

- `active_transcoding_jobs`: scale video workers on actual job count
- `redis_list_length{list="processing_queue"}`: scale workers when the Redis queue fills
- `http_requests_in_flight`: scale API handlers on concurrent in-flight requests
- `pg_stat_activity_count`: scale database-heavy workers on active DB connections

The advantage: you are scaling on a metric your application owns. You control its granularity, its meaning, and its thresholds. CPU and memory are infrastructure proxies. A Prometheus metric can be a direct measure of work.

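One caveat: Prometheus-based scaling adds a dependency chain. If Prometheus is unreachable or the scrape fails, KEDA cannot evaluate the query. Define a fallback block on the ScaledObject (a failureThreshold and a fixed replicas count) so that repeated query failures pin the workload at a known size rather than leaving the replica count undefined. Fallback has traditionally applied only to triggers that use metricType: AverageValue rather than Value, so confirm the behavior for your KEDA version.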
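A Prometheus trigger with a guard against query failures might look like the sketch below. The Prometheus address, the Deployment name, the threshold of 5 jobs per pod, and the fallback size of 2 replicas are assumptions for illustration; `active_transcoding_jobs` is the example metric from the list above.

```yaml
# Sketch only: server address, names, threshold, and fallback size are assumptions.
apiVersion: keda.sh/v1alpha1
kind: ScaledObject
metadata:
  name: transcoder-prometheus
spec:
  scaleTargetRef:
    name: transcoder
  pollingInterval: 30             # seconds between query evaluations
  minReplicaCount: 0
  maxReplicaCount: 15
  fallback:
    failureThreshold: 3           # consecutive failed evaluations before fallback applies
    replicas: 2                   # replica count held while the scaler is failing
  triggers:
    - type: prometheus
      metricType: AverageValue    # query result is divided across replicas
      metadata:
        serverAddress: http://prometheus.monitoring.svc.cluster.local:9090
        query: sum(active_transcoding_jobs)
        threshold: "5"            # target active jobs per pod
```

The fallback size is a judgment call: large enough to keep work moving during a monitoring outage, small enough that a long outage does not become an expensive one.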

The Cost Case: Scale-to-Zero vs Always-On Minimum Replicas

The financial argument for KEDA is straightforward for batch workloads.

Consider a batch image processing service: 5 worker pods, each requiring 1 vCPU and 2 GiB memory. The workload runs in bursts during business hours and is completely idle overnight and on weekends.

| Configuration | Hours Running Per Week | Monthly Node Cost Calculation (m5.large at $0.096/hr) | Monthly Cost |
|---|---|---|---|
| HPA, minReplicas=5 | 168 hours (always on) | 5 nodes x $0.096 x 730 hrs | $350 |
| KEDA, scale-to-zero | 45 hours (business hours only) | 5 nodes x $0.096 x 195 hrs | $94 |

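That is a 73% reduction in worker fleet cost with no change to processing throughput. The figures assume one worker pod per node and a node autoscaler that removes nodes once they are empty; without node scale-down, removing pods saves nothing. The pods exist when work exists. They disappear when there is nothing to do.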

Non-production environments compound this further. A staging cluster running these workers for testing purposes runs zero real workload overnight. With always-on HPA, you pay for idle staging workers 24 hours a day. With KEDA scale-to-zero, staging workers cost nothing outside of active test runs.

KEDA makes scale-to-zero a first-class primitive. The default changes from “always on unless proven otherwise” to “off unless there is work to do.”

Where This Breaks Down

KEDA is not universally the right choice. Know the failure conditions.

Long pod startup times break scale-from-zero. If your application takes 3 minutes to initialize, scale-to-zero creates a 3-minute response delay when the first message arrives. Pre-warming with the cron scaler addresses predictable patterns. Unpredictable bursts still incur that startup cost.

Scale-to-zero creates thundering herd risk. If 10,000 messages land simultaneously and you have zero pods, KEDA will create pods as fast as Kubernetes allows. Node provisioning may lag behind demand. Design your queue producers to handle back-pressure.
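One possible mitigation on the consumer side, offered here as a sketch rather than a recommendation from the original design, is to bound how fast and how far the fleet can grow. A ScaledObject can pass standard HPA scaling behavior through its advanced section. The names, the ceiling of 25 replicas, and the rate of 10 pods per minute are all illustrative assumptions.

```yaml
# Sketch only: names, ceiling, and scale-up rate are assumptions.
apiVersion: keda.sh/v1alpha1
kind: ScaledObject
metadata:
  name: burst-worker-bounded
spec:
  scaleTargetRef:
    name: burst-worker
  minReplicaCount: 0
  maxReplicaCount: 25             # hard ceiling on the fleet during a burst
  advanced:
    horizontalPodAutoscalerConfig:
      behavior:
        scaleUp:
          stabilizationWindowSeconds: 0
          policies:
            - type: Pods
              value: 10           # add at most 10 pods
              periodSeconds: 60   # per 60-second window
  triggers:
    - type: aws-sqs-queue
      authenticationRef:
        name: aws-sqs-auth        # TriggerAuthentication assumed to be defined separately
      metadata:
        queueURL: https://sqs.us-east-1.amazonaws.com/123456789012/burst-jobs
        awsRegion: us-east-1
        queueLength: "100"
```

A slower ramp trades some drain time for a scale-out that node provisioning can keep up with, which is why producer-side back-pressure still matters.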

Prometheus scaler accuracy depends on the scrape interval. If your Prometheus scrape interval is 60 seconds and KEDA polls every 30 seconds, you may act on a metric that is already 90 seconds stale. Tune both to match your required responsiveness.

KEDA and Karpenter interact. If you use Karpenter for node autoscaling, scaling from zero can create pods that have no node to run on. Karpenter must provision a node before those pods can start. Factor in node provisioning time (typically 60 to 90 seconds on EKS) on top of pod startup time when evaluating whether scale-to-zero is appropriate for your latency requirements.

CPU scaling is simple. It is built in. It works for stateless web services with synchronous request patterns. For everything async, event-driven, or batch-oriented, the signal is wrong and the cost of that mismatch is real. KEDA gives you the right signal. The operational overhead is low, and the cost reduction is measurable.